"Deep Speech Factorization": Difference between revisions


Revision as of 00:49, 30 October 2017 (Monday)

Project name

Deep Factorization for Speech Signals

Project members

Dong Wang, Lantian Li, Zhiyuan Tang

Introduction

Various informative factors are mixed in speech signals, which makes it very difficult to decode any one of them. By informative factors we mean task-oriented factors, e.g., linguistic content, speaker identity and emotion, rather than factors of physical models, e.g., the excitation and modulation of the traditional source-filter model.

A natural idea is that, if we can factorize speech signals into individual informative factors, all speech signal processing tasks will be greatly simplified. However, as expected, this factorization is highly difficult, for at least two reasons. Firstly, the mechanism by which the various factors are mixed together is far from clear; the only thing we know right now is that it is rather complex. Secondly, it is also far from known whether some typical factors, e.g., speaker traits, are short-time identifiable. That is to say, we essentially did not know whether speech signals are short-time factorizable until we recently found a deep speaker feature learning approach[1].

The discovery that speech signals are short-time factorizable is important, and it opens a door to a new paradigm of speech research. This project follows this direction and attempts to lay down the foundation of this new science.

Speaker feature learning

The discovery of the short-time property of speaker traits is the key step towards speech signal factorization, as the speaker trait is one of the two main factors; the other is linguistic content, which has long been known to consist of short-time patterns.

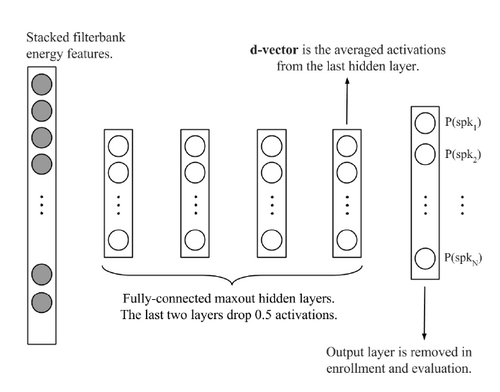

The key idea of speaker feature learning is simply to discriminate the training speakers from short-time frames using deep neural networks (DNNs), an approach that dates back to 2014 and the work of Variani et al.[2]. As shown below, the output of the DNN corresponds to the training speakers, and the frame-level speaker features are read from the last hidden layer. The basic assumption is that if the output of the last hidden layer can be used as the input feature of the output layer (a softmax regression classifier), these features should be speaker discriminative.

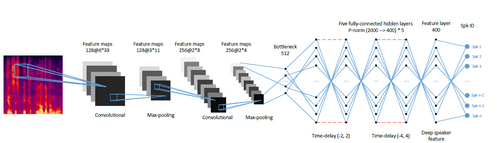
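As a minimal sketch of the idea above, the following pure-Python toy network classifies a single frame over the training speakers and returns the last hidden layer activation as the frame-level speaker feature. The layer sizes, the ReLU activation, the random untrained weights and all function names are assumptions made for illustration; they are not taken from [1] or [2].

```python
import math
import random

def affine(x, weights, bias):
    """Compute weights * x + bias for one frame (plain lists of floats)."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(x):
    return [max(0.0, v) for v in x]

def softmax(x):
    m = max(x)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in x]
    total = sum(exps)
    return [e / total for e in exps]

def random_layer(n_out, n_in, rng):
    """Hypothetical untrained layer; a real system would learn these weights."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def forward(frame, hidden_layers, output_layer):
    """Return (speaker_feature, speaker_posteriors) for one input frame."""
    h = frame
    for weights, bias in hidden_layers:
        h = relu(affine(h, weights, bias))
    # h is now the last hidden layer activation: the frame-level speaker feature.
    posteriors = softmax(affine(h, *output_layer))
    return h, posteriors

rng = random.Random(0)
FEAT_DIM, HIDDEN_DIM, N_SPEAKERS = 8, 16, 4  # toy sizes, assumed for illustration
hidden_layers = [random_layer(HIDDEN_DIM, FEAT_DIM, rng),
                 random_layer(HIDDEN_DIM, HIDDEN_DIM, rng)]
output_layer = random_layer(N_SPEAKERS, HIDDEN_DIM, rng)

frame = [rng.gauss(0.0, 1.0) for _ in range(FEAT_DIM)]
feature, posteriors = forward(frame, hidden_layers, output_layer)
```

During training only the posteriors enter the loss; at test time the output layer is ignored and `feature` is what gets passed to the back-end.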

However, the vanilla structure of Variani et al. performs rather poorly compared to its i-vector counterpart. One reason is that the simple back-end scoring relies on averaging the frame-level features to derive the utterance-level representations (called d-vectors); another is that the vanilla DNN structure does not take much account of context and pattern learning. We therefore proposed a CT-DNN model that can learn stronger speaker features. The structure is shown below[1]:

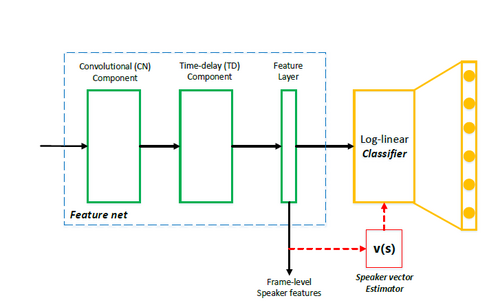
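The simple averaging back-end mentioned above can be illustrated with a short sketch: frame-level features are averaged into an utterance-level d-vector, and two d-vectors are then compared. Cosine similarity is used here as the scoring function, which is an assumption for this example; the function names and the toy feature values are also hypothetical.

```python
import math

def d_vector(frame_features):
    """Average frame-level features into one utterance-level vector."""
    n = len(frame_features)
    dim = len(frame_features[0])
    return [sum(f[i] for f in frame_features) / n for i in range(dim)]

def cosine_score(a, b):
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

# Toy frame-level features for an enrollment and a test utterance.
enroll_frames = [[1.0, 0.0, 1.0], [3.0, 0.0, 1.0]]
test_frames = [[2.0, 0.0, 1.0], [2.0, 0.0, 1.0]]

enroll_vec = d_vector(enroll_frames)
test_vec = d_vector(test_frames)
score = cosine_score(enroll_vec, test_vec)  # higher means more similar
```

Because averaging treats every frame equally, the quality of the d-vector depends entirely on how discriminative the individual frame-level features are, which is the motivation for a stronger feature net.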

Recently, we found that 'all-info' training is effective for learning features. Looking back at the DNN and CT-DNN, although the features read from the last hidden layer are discriminative, they are not 'all discriminative', because some discriminative information can also be implemented in the last affine layer. A better strategy is to let the feature generation net (feature net) learn everything needed for discrimination. To achieve this, we discard the parametric classifier (the last affine layer) and use the simple cosine distance to conduct the classification. An iterative training scheme can be used to implement this idea: after each epoch, the speaker features are averaged to derive speaker vectors, and the speaker vectors are then used in place of the last affine layer. Training then proceeds as usual. The new structure is as follows:
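The iterative scheme described above can also be sketched in code. The example below covers only the two non-parametric pieces: re-estimating the speaker vectors by averaging features, and classifying a frame by cosine similarity to those vectors. The gradient update of the feature net between re-estimations is omitted, and the softmax over scaled cosine scores, the `scale` value, the function names and the toy data are all assumptions for illustration rather than details from the source.

```python
import math
from collections import defaultdict

def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)

def cosine(a, b):
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))

def estimate_speaker_vectors(features, labels):
    """Average the frame-level features of each training speaker."""
    sums, counts = {}, defaultdict(int)
    for feat, spk in zip(features, labels):
        if spk not in sums:
            sums[spk] = [0.0] * len(feat)
        sums[spk] = [s + x for s, x in zip(sums[spk], feat)]
        counts[spk] += 1
    return {spk: [s / counts[spk] for s in total] for spk, total in sums.items()}

def classify(feature, speaker_vectors):
    """Pick the speaker whose vector is closest in cosine similarity."""
    return max(speaker_vectors, key=lambda spk: cosine(feature, speaker_vectors[spk]))

def cross_entropy(feature, label, speaker_vectors, scale=10.0):
    """Softmax cross-entropy over scaled cosine scores (scale is an assumption)."""
    speakers = sorted(speaker_vectors)
    logits = [scale * cosine(feature, speaker_vectors[s]) for s in speakers]
    m = max(logits)
    log_norm = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_norm - logits[speakers.index(label)]

# Toy features standing in for the output of the feature net after one epoch.
features = [[1.0, 0.1], [0.9, 0.0], [0.0, 1.0], [0.2, 0.8]]
labels = ["spk_a", "spk_a", "spk_b", "spk_b"]

# Step run after each epoch: re-estimate the speaker vectors, then use them
# as the fixed (non-parametric) classifier for the next epoch.
speaker_vectors = estimate_speaker_vectors(features, labels)
predictions = [classify(f, speaker_vectors) for f in features]
loss = sum(cross_entropy(f, l, speaker_vectors)
           for f, l in zip(features, labels)) / len(features)
```

Since the speaker vectors are computed from the features rather than learned as free parameters, any reduction in the loss has to come from the feature net itself, which is the stated goal of the scheme.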

References

[1] Lantian Li, Yixiang Chen, Ying Shi, Zhiyuan Tang, and Dong Wang, “Deep speaker feature learning for text-independent speaker verification,” Interspeech 2017.

[2] Ehsan Variani, Xin Lei, Erik McDermott, Ignacio Lopez Moreno, and Javier Gonzalez-Dominguez, “Deep neural networks for small footprint text-dependent speaker verification,” ICASSP 2014.