Difference between revisions of "OLR Challenge 2020"


Revision as of 06:57, 19 December 2020 (Saturday)


Oriental Language Recognition (OLR) 2020 Challenge

Oriental languages have many distinctive characteristics. The OLR challenge series aims to advance language recognition technology for oriental languages. Following the success of OLR Challenge 2016, OLR Challenge 2017, OLR Challenge 2018 and OLR Challenge 2019, the 2020 challenge follows the same theme but sets up three more challenging tasks:

- Task 1: cross-channel LID is a closed-set identification task: the language of each utterance is among the 6 known traditional target languages, but the utterances were recorded over different channels.

- Task 2: dialect identification is an open-set identification task, in which three non-target languages are added to the test set alongside the three target dialects.

- Task 3: noisy LID, where noisy test data of the 5 target languages will be provided.
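The difference between the closed-set decision in Task 1 and the open-set decision in Task 2 can be illustrated with a minimal sketch. It is not the challenge baseline or its scoring method: the function names, the score values and the threshold-based rejection rule are all assumptions chosen for illustration only.

```python
from typing import Dict, Optional


def closed_set_decision(scores: Dict[str, float]) -> str:
    """Closed-set: every utterance belongs to a target, so pick the best score."""
    return max(scores, key=scores.get)


def open_set_decision(scores: Dict[str, float], threshold: float) -> Optional[str]:
    """Open-set: accept the best target only if its score reaches the threshold;
    otherwise reject the utterance as non-target (returns None).
    Threshold rejection is an assumed, illustrative rule."""
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None


# Hypothetical per-dialect scores for one utterance (illustrative values only).
dialect_scores = {"Hokkien": 0.41, "Sichuanese": 0.35, "Shanghainese": 0.24}

print(closed_set_decision(dialect_scores))      # Hokkien
print(open_set_decision(dialect_scores, 0.60))  # None
```

In the closed-set case the best-scoring language is always returned, whereas in the open-set case a low-confidence utterance can be rejected, which is how non-target languages in the test set would be handled under this assumed rule.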

We will publish the results in a special session of APSIPA ASC 2020.

News

- Jun. 1, challenge registration open.

- Jun. 8, evaluation plan release and AP20-OLR training/dev data release.

Data

The challenge is based on two multilingual databases: AP16-OL7, which was designed for the OLR Challenge 2016, and AP17-OL3, which was designed for the OLR Challenge 2017. For AP20-OLR, a standard test set, AP20-OLR-test, is also provided.

AP16-OL7 is provided by Speechocean (www.speechocean.com), and AP17-OL3 is provided by Tsinghua University, Northwest Minzu University and Xinjiang University, under the M2ASR project supported by NSFC.

The features of AP16-OL7 include:

- Mobile channel

- 7 languages in total

- 71 hours of speech signals in total

- Transcriptions and lexica are provided

- The data profile is here

- The License for the data is here

The features of AP17-OL3 include:

- Mobile channel

- 3 languages in total

- Tibetan provided by Prof. Guanyu Li@Northwest Minzu Univ.

- Uyghur and Kazak provided by Prof. Askar Hamdulla@Xinjiang University.

- 35 hours of speech signals in total

- Transcriptions and lexica are provided

- The data profile is here

- The License for the data is here

AP20-OLR-test provides one test subset for each of the 3 tasks:

- Task 1: AP20-OLR-channel-test: This subset is designed for the cross-channel LID task. It contains six of the ten target languages, recorded with different recording equipment and in different environments.

- Task 2: AP20-OLR-dialect-test: This subset is designed for the dialect identification task, including three dialects which are Hokkien, Sichuanese and Shanghainese.

- Task 3: AP20-OLR-noisy-test: This subset is designed for the noisy LID task. It contains five of the ten target languages, recorded in noisy environments (low SNR).

Evaluation plan

Refer to the following paper:

Zheng Li, Miao Zhao, Qingyang Hong, Lin Li, Zhiyuan Tang, Dong Wang, Liming Song and Cheng Yang: AP20-OLR Challenge: Three Tasks and Their Baselines, submitted to APSIPA ASC 2020. pdf

Evaluation tools

- The Kaldi and PyTorch recipes for the baselines. [1]

Participation rules

- Participants from both academia and industry are welcome

- Publications based on the data provided by the challenge should cite the following papers:

Dong Wang, Lantian Li, Difei Tang, Qing Chen, AP16-OL7: a multilingual database for oriental languages and a language recognition baseline, APSIPA ASC 2016. pdf

Zhiyuan Tang, Dong Wang, Yixiang Chen, Qing Chen: AP17-OLR Challenge: Data, Plan, and Baseline, APSIPA ASC 2017. pdf

Zhiyuan Tang, Dong Wang, Qing Chen: AP18-OLR Challenge: Three Tasks and Their Baselines, submitted to APSIPA ASC 2018. pdf

Zhiyuan Tang, Dong Wang, Liming Song: AP19-OLR Challenge: Three Tasks and Their Baselines, submitted to APSIPA ASC 2019. pdf

Zheng Li, Miao Zhao, Qingyang Hong, Lin Li, Zhiyuan Tang, Dong Wang, Liming Song and Cheng Yang: AP20-OLR Challenge: Three Tasks and Their Baselines, submitted to APSIPA ASC 2020. pdf

Important dates

- Jun. 1, AP20-OLR training/dev data release.

- Oct. 1, registration deadline.

- Oct. 20, test data release.

- Nov. 1, 24:00, Beijing time, submission deadline.

- Nov. 27, convening of seminar.

- Dec. 10, results announcement.

(Due to COVID-19, the seminar and award ceremony will be adjusted according to the actual situation.)

Registration procedure

If you intend to participate in the challenge, or if you have any questions, comments or suggestions about it, please send an email to the organizers (ap_olr@163.com). Participants are required to provide the following information, to sign the Data License Agreement on behalf of an organization or company engaged in speech research or technology, and to send back the scanned copy by email.

- Team Name:

- Institute:

- Participants:

- Duty person:

- Homepage or published papers in the speech field of the person/organization/company:

Organization Committee

- Qingyang Hong, Xiamen University [home]

- Lin Li, Xiamen University [home]

- Zheng Li, Xiamen University

- Dong Wang, Tsinghua University [home]

- Zhiyuan Tang, Tsinghua University [home]

- Ming Li, Duke-Kunshan University

- Xiaolei Zhang, NWPU

- Liming Song, Speechocean

- Cheng Yang, Speechocean

Ranking list

The Oriental Language Recognition (OLR) Challenge 2020, co-organized by Xiamen University, CSLT@Tsinghua University, Duke-Kunshan University, Northwestern Polytechnical University and Speechocean, was completed with great success. The results have been published at APSIPA ASC 2020.

Overview

In total, 58 teams registered for this challenge. By the submission deadline, more than 20 teams had submitted their results. The submissions are ranked separately for each of the 3 language recognition tasks: cross-channel LID, open-set dialect identification, and noisy LID. Team information is presented only for the top 10 teams.

For more details and the history of the challenge, see the slides.

Task 1

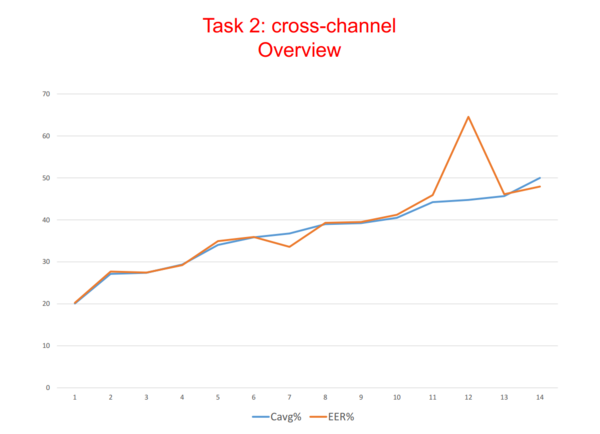

Task 2

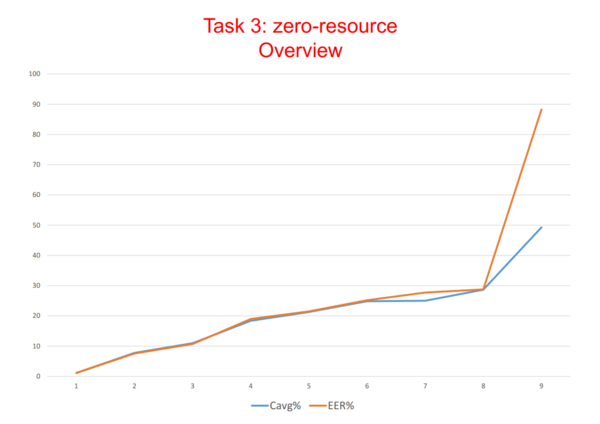

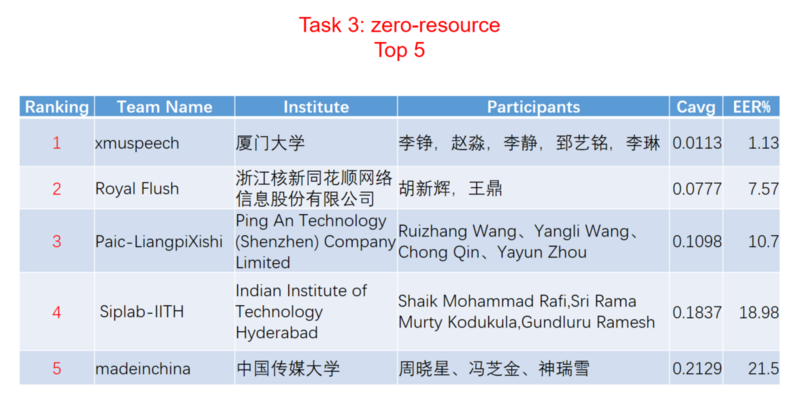

Task 3

Top system description

- Descriptions (in Chinese) from Innovem.

- Descriptions from xmuspeech.

- Descriptions from SSLab.